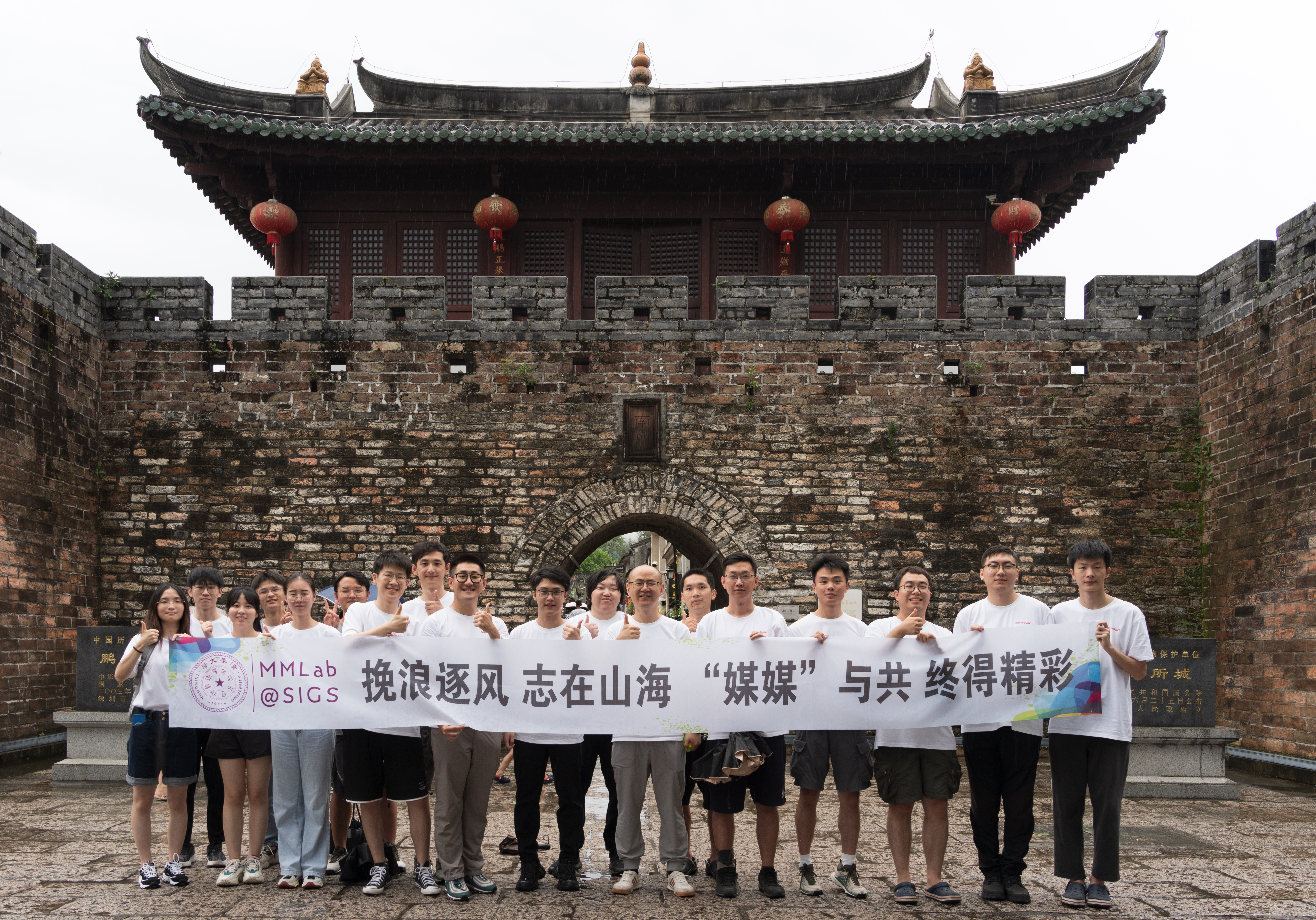

About Us

MMLab@SIGS

We are a dynamic research collective at the forefront of artificial intelligence, dedicated to solving the fundamental challenges of embodied intelligence. Our vision is to create self-evolving agents capable of perceiving, reasoning, and acting in complex, real-world environments: agents that continuously learn and adapt, seamlessly bridging the digital and physical worlds.

Our mission is to establish a complete pipeline for these agents, forming a closed loop of efficient data preparation, multimodal perception, spatiotemporal decision-making, and continuous learning. By integrating cutting-edge research in 3D vision, world models, and large language models, we are building the foundation for the next generation of intelligent systems.

We are always looking for motivated PhD students, postdocs, and research assistants who share our vision. Check out the Join Us section and follow our WeChat official account.